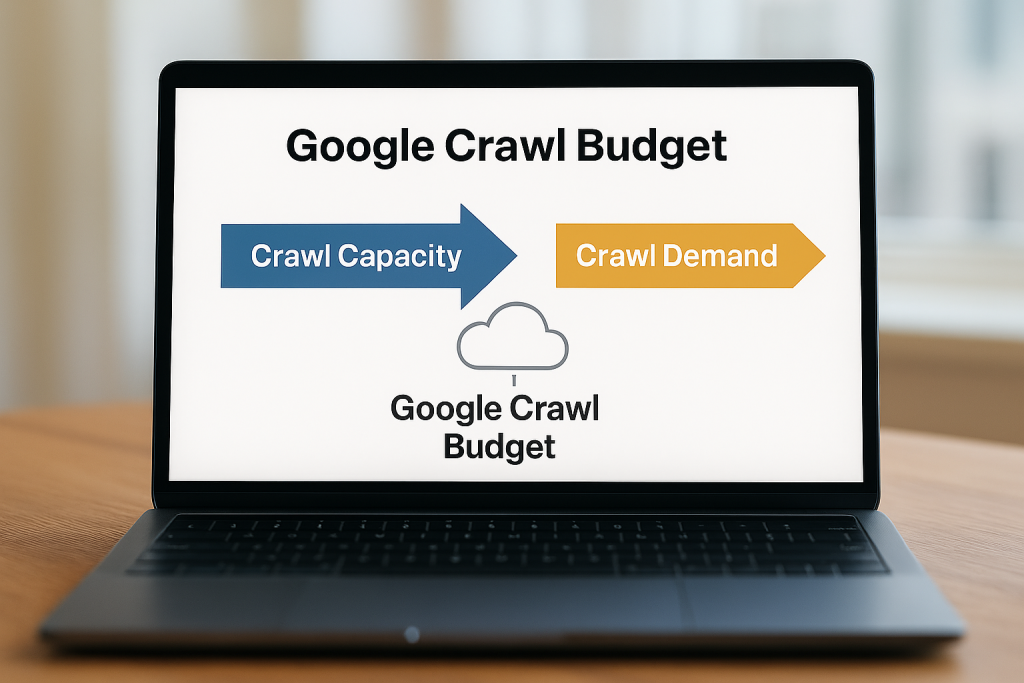

In search engine optimisation (SEO), ‘crawl budget’ is a critical concept. Understanding your SEO crawl budget can help ensure that search engines efficiently discover, index, and rank your website’s content. For businesses aiming to maximise visibility, managing crawl budget effectively is a key step toward stronger search performance.

What Is Crawl Budget in SEO?

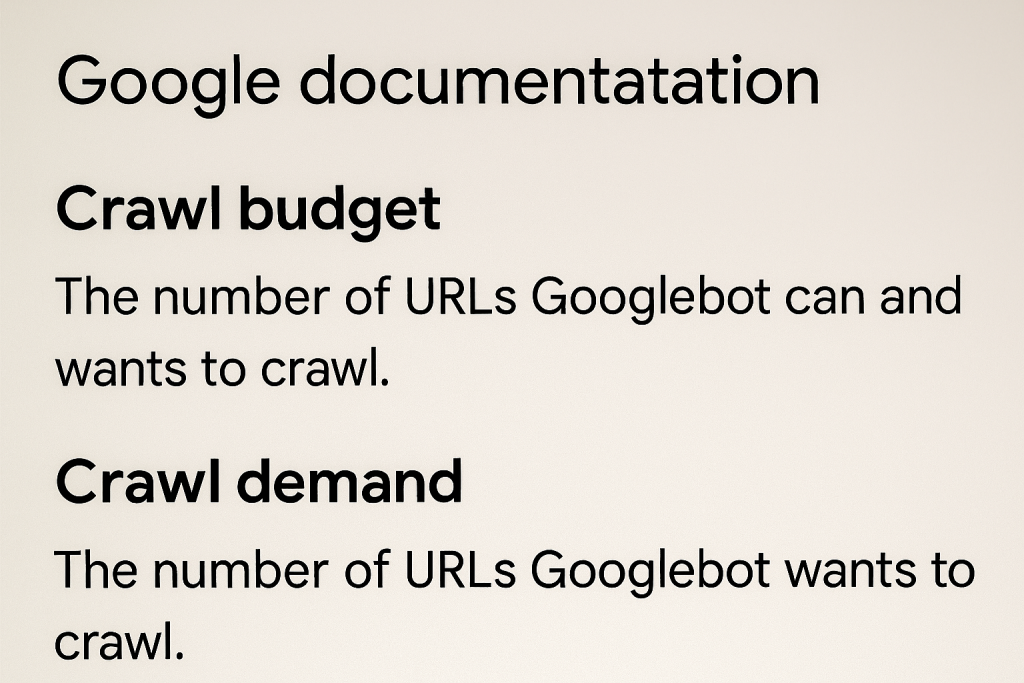

A ‘crawl budget’ refers to the number of pages on your website that search engines are willing and able to crawl within a given timeframe. There are a vast number of sites on the web, and search engines don’t have unlimited resources, so they can’t keep up with every change on every site at all times.

As a result, search engines assign a crawl budget to each website, so they can prioritise their crawling efforts and use their resources efficiently. However, Google does clarify that crawl budget is not something most publishers and websites need to worry about. If your site has fewer than a few thousand URLs, it will most likely be crawled efficiently.

To understand crawl budget, it helps to look at the three key steps of search engine visibility:

Crawling:

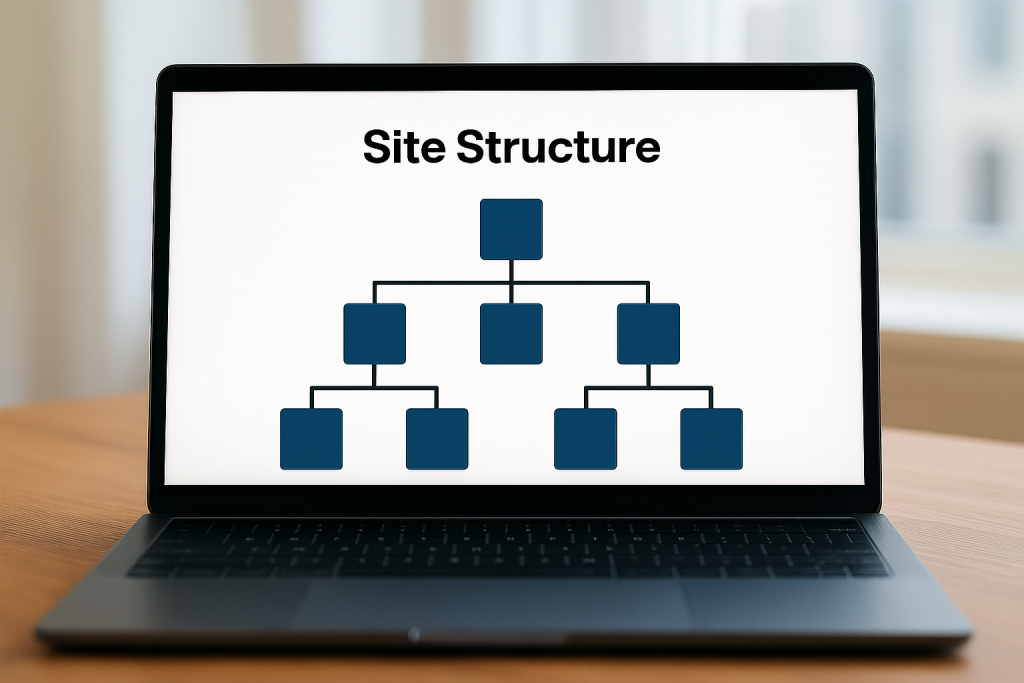

Search engine ‘crawler bots’ (like the ones Google uses) scan your website to discover pages and links. This process is the first step in making your content visible online. Bots follow internal links, sitemaps, and external references to navigate through your site. How efficiently they can perform this crawl depends on your crawl budget. If web crawlers (or “spiders”) spend too much of their time looking at duplicate pages, broken links, or irrelevant content, they may miss the pages that matter most.
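To make the idea concrete, here is a minimal sketch of a crawl that stops once its budget is spent. It is a toy model, not how Googlebot or any real crawler schedules its work: the `crawl` function, the `SITE` link map, and the URLs in it are all hypothetical, and the "site" is an in-memory dictionary rather than real pages.

```python
from collections import deque


def crawl(links, start, budget):
    """Breadth-first crawl of an in-memory link map, stopping after `budget` pages."""
    seen = {start}
    queue = deque([start])
    crawled = []
    while queue and len(crawled) < budget:
        url = queue.popleft()
        crawled.append(url)  # each page visited spends one unit of budget
        for target in links.get(url, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return crawled


# Hypothetical site: the home page links to three near-duplicate sort URLs
# before it links to the product page that actually matters.
SITE = {
    "/": [
        "/shoes?sort=price",
        "/shoes?sort=name",
        "/shoes?sort=new",
        "/shoes/red-trainer",
    ],
    "/shoes?sort=price": [],
    "/shoes?sort=name": [],
    "/shoes?sort=new": [],
    "/shoes/red-trainer": [],
}

print(crawl(SITE, "/", budget=4))
print(crawl(SITE, "/", budget=5))
```

With a budget of four pages, the simulated bot spends everything on the home page and the three near-duplicate URLs, and never reaches `/shoes/red-trainer`. Raising the budget to five, or removing the duplicates, lets it reach the product page.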

Indexing:

Once crawled, pages are stored in the search engine’s index. An index is a huge database of all the content web crawlers have discovered. This database is what search engines draw from when responding to user queries. If a page isn’t indexed, it cannot appear in search results, which makes indexing a crucial step. Indexing is also affected by crawl budget, because only the pages that bots successfully crawl can be considered for inclusion in the index.

Search Engine Ranking:

Indexed pages are then evaluated against search queries. Search engines use numerous factors, such as relevance, authority, and user experience, in determining where your page ranks. Without proper crawling and indexing, ranking cannot happen. By managing crawl budget effectively, you help search engines move smoothly through these stages, ensuring your content is crawled, indexed, and ultimately ranked where your audience can find it.

How Does Crawl Budget Affect SEO?

Crawl budget is not a ranking factor in itself, but it affects rankings indirectly because it determines how quickly and comprehensively your site is crawled and indexed. When managed effectively, your crawl budget helps search engines prioritise your most valuable content and index it quickly, so that it can compete in the rankings. For businesses, this means stronger online visibility, faster discovery of new content, and a more efficient path to reaching your target audience.

To recap, the key reasons why monitoring and managing your crawl budget is essential for SEO success are:

Indexation speed:

Pages that haven’t been crawled cannot appear in search results. A well-managed crawl budget ensures that important pages are discovered, understood, and included in search results as quickly as possible.

Visibility of new content:

Fresh content may take longer to rank if the crawl budget is mismanaged. By directing crawl resources toward new or updated pages, you help search engines get to grips with new information faster.

Competitive advantage:

Websites that manage crawl budget effectively often outperform competitors in search visibility. By ensuring that your most important pages are crawled and indexed, you gain an edge in the fight to rank for high-value keywords.

How Can I Optimise My SEO Crawl Budget?

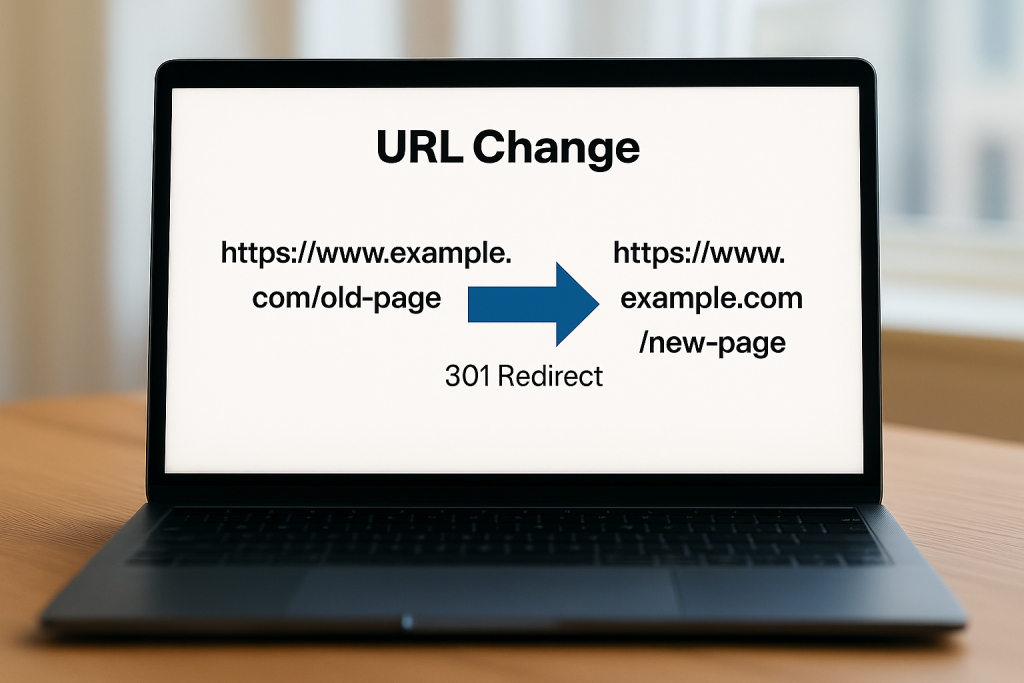

By improving crawl efficiency, you ensure that bots spend their limited resources on high-value content, rather than wasting time on errors or irrelevant URLs. Best practices here include:

| Fixing Broken Links and Avoiding Redirect Chains | Strengthening Internal Linking | Removing Duplicate or Thin Content |
| --- | --- | --- |
| Broken links and long redirect chains waste crawl budget by sending bots to dead ends, or on lengthy and unnecessary detours. Regularly auditing your site for link errors ensures that crawlers reach the right pages quickly. | Internal links help bots navigate your site efficiently. A clear linking structure ensures that crawl budget flows naturally toward priority pages, improving their chances of being indexed and ranked. | Duplicate pages, near-identical content, and thin pages that hold little value for the user can dilute crawl efficiency. Consolidating or eliminating duplicate content and focusing on high-quality pages helps search engines prioritise what’s most important. |
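As an illustration of the link audit described above, the sketch below flags broken links and redirect chains. It assumes you have already exported your link targets, redirect rules, and live pages from a crawl tool or your CMS; the function names (`resolve`, `audit_links`) and all sample URLs are hypothetical, and nothing here makes real HTTP requests.

```python
def resolve(url, redirects, max_hops=10):
    """Follow redirect rules from `url`; return (final_url, number_of_hops)."""
    hops = 0
    while url in redirects and hops < max_hops:
        url = redirects[url]
        hops += 1
    return url, hops


def audit_links(targets, redirects, live_pages):
    """Return {link_target: issue} for links that waste crawl budget."""
    issues = {}
    for target in targets:
        final_url, hops = resolve(target, redirects)
        if final_url in redirects:
            # Hit the hop limit while still being redirected.
            issues[target] = "redirect loop or overly long chain"
        elif final_url not in live_pages:
            issues[target] = "broken"
        elif hops > 1:
            issues[target] = f"redirect chain ({hops} hops)"
    return issues


# Hypothetical sample data.
REDIRECTS = {"/old-pricing": "/pricing-2023", "/pricing-2023": "/pricing"}
LIVE_PAGES = {"/", "/pricing", "/about"}
LINK_TARGETS = ["/old-pricing", "/about", "/careers", "/pricing-2023"]

for target, issue in audit_links(LINK_TARGETS, REDIRECTS, LIVE_PAGES).items():
    print(target, "->", issue)
```

In this sample, `/old-pricing` is reported as a two-hop chain and `/careers` as broken. A single redirect such as `/pricing-2023` is not flagged, although updating the link to point straight at `/pricing` would still save the bot a hop.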

Where Can I Check My Crawl Budget?

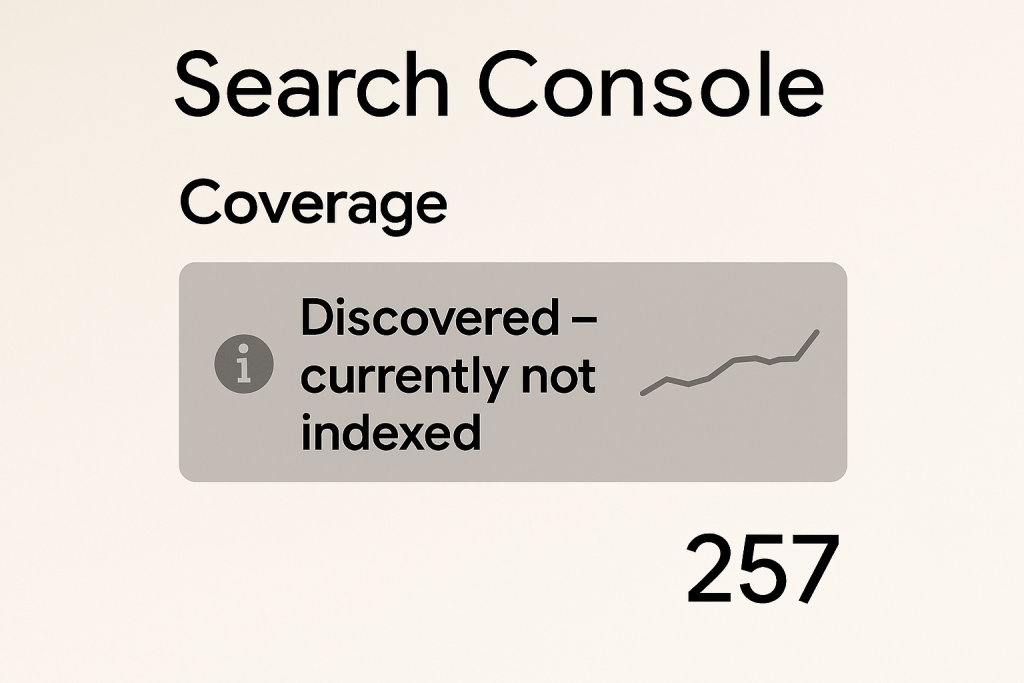

In Google Search Console, the Crawl Stats report helps you understand and analyse how Google crawls your pages. It reports on metrics such as crawl requests, response times, and server availability.
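If you also have access to your server’s access logs, you can cross-check those figures with a rough count of your own. The sketch below is an assumption-laden example rather than a replacement for Search Console: it assumes logs in the common "combined" format, uses invented sample lines, and identifies Googlebot by user-agent string alone, which can be spoofed. The `googlebot_summary` function name is hypothetical.

```python
import re
from collections import Counter

# Matches the "combined" access log format used by many web servers.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_summary(lines):
    """Count requests whose user agent mentions Googlebot, by status and by path."""
    by_status = Counter()
    by_path = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            by_status[match.group("status")] += 1
            by_path[match.group("path")] += 1
    return by_status, by_path


# Invented sample lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Oct/2024:13:55:40 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Oct/2024:13:56:01 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

by_status, by_path = googlebot_summary(SAMPLE_LOG)
print(dict(by_status))
print(dict(by_path))
```

A high share of 404 responses or redirects in the status counts suggests that bots are spending requests on URLs that no longer matter.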

SEO Crawl Budget in Summary

Crawl budget may sound like a minor technical SEO detail at first, but it plays a pivotal role in how search engines discover, index, and rank your website’s pages. By managing your site’s crawl budget, you help bots focus on your most valuable content, speeding up indexation, improving visibility for new pages, and reinforcing your site’s authority signals. In short, crawl budget optimisation can serve as the foundation that supports stronger rankings and sustainable online growth.

Our Case Studies

Explore our case studies to see how Seek Marketing Partners has transformed businesses like yours through our proven suite of SEO strategies and services.

Get our Help

At Seek Marketing Partners, we help businesses translate complex SEO concepts into plain English and measurable results. Our team specialises in data-driven strategies, efficiency, and ensuring that every page contributes to stronger rankings and improved digital performance. Partner with Seek Marketing Partners today to elevate your SEO strategy.